Introduction

By default, a new Kubernetes cluster provides no network isolation between pods: every pod can communicate with every other pod and with the outside world. To control traffic between workloads and to external services, Kubernetes provides the NetworkPolicy resource, a native Kubernetes object for defining these isolation rules.

Why Network Policies?

One way of viewing a Kubernetes cluster is as a platform that hosts heterogeneous workloads and manages them in one place. These workloads may be developed by different teams within the same organization, or even by third parties outside it.

In these cases, isolating traffic between workloads becomes a security necessity, and in some industries it is also a compliance requirement.

Network Policies are enforced by the CNI plugin installed on the cluster, so using them requires a plugin that supports them, and not all CNI plugins do. Without such a plugin, NetworkPolicy objects can still be created, but they have no effect.

What about Amazon EKS clusters?

Until recently, the default CNI plugin that ships with EKS clusters, the Amazon VPC CNI, did not support Network Policies. Anyone who wanted to use them had to install a third-party plugin such as Calico.

Since 31st August 2023, the AWS VPC CNI supports Kubernetes Network Policies natively, starting with release v1.14.0, so no additional third-party plugin is required.

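Amazon EKS adopted eBPF to implement Network Policy enforcement in the CNI plugin. eBPF has become a widely used foundation for packet filtering. Whereas iptables matches each packet against chains of rules that are evaluated sequentially, eBPF runs custom, kernel-verified programs at hook points in the kernel's networking path, and those programs can look up policy state in in-kernel maps. This makes it very efficient, especially as the number of rules grows.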
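As a rough analogy only (this is not the kernel code of iptables or of the VPC CNI agent), the sketch below contrasts a linear scan through an ordered rule list, the way an iptables chain is traversed, with a single hash lookup, similar in spirit to an eBPF program consulting a BPF map. The rule format and the `allowed_linear` / `allowed_hashed` names are hypothetical.

```python
from typing import List, Set, Tuple

# Hypothetical rule format: an allowed (source IP, destination port) pair.
Rule = Tuple[str, int]


def allowed_linear(rules: List[Rule], src: str, port: int) -> Tuple[bool, int]:
    """Check rules one by one, as an iptables chain is traversed.

    Returns the verdict and the number of rules inspected.
    """
    inspected = 0
    for rule in rules:
        inspected += 1
        if rule == (src, port):
            return True, inspected
    return False, inspected


def allowed_hashed(table: Set[Rule], src: str, port: int) -> bool:
    """One hash lookup, similar in spirit to consulting a BPF map."""
    return (src, port) in table


if __name__ == "__main__":
    rules = [(f"10.0.{i // 256}.{i % 256}", 80) for i in range(5000)]
    verdict, inspected = allowed_linear(rules, "10.0.19.135", 80)
    print(verdict, inspected)  # True 5000 (the match is the last rule)
    print(allowed_hashed(set(rules), "10.0.19.135", 80))  # True
```

The linear version inspects every rule before reaching the match at the end of the list, while the cost of the set lookup does not grow with the number of rules. That is the intuition behind the efficiency claim, not a benchmark.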

The new VPC CNI release adds a ‘node agent’ that runs as a container in the ‘aws-node’ DaemonSet pods. This node agent is responsible for maintaining the eBPF programs on each node.

When the Network Policy feature is enabled on the VPC CNI add-on, a Network Policy Controller is launched and actively watches for newly created Network Policies. The controller then instructs the node agent to enforce the rules by updating the eBPF programs.

Enabling Network Policies on the VPC CNI

We will use Terraform to create a new EKS cluster running Kubernetes 1.27 (Network Policies require EKS 1.25 or later) and enable Network Policy support in the VPC CNI add-on configuration. The cluster is created from the eks-cluster repo.
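The two prerequisites (EKS 1.25 or later, and VPC CNI v1.14.0 or later) can be checked with a small helper before relying on policies. This is a sketch: the function names are ours, and it assumes add-on versions are reported in a form like `v1.15.0-eksbuild.1`, so adjust the parsing to what your cluster actually reports.

```python
import re
from typing import Tuple

MIN_K8S = (1, 25)     # EKS version from which Network Policies are supported
MIN_CNI = (1, 14, 0)  # first VPC CNI release with Network Policy support


def parse_version(text: str) -> Tuple[int, ...]:
    """Extract the leading numeric part, e.g. 'v1.15.0-eksbuild.1' -> (1, 15, 0)."""
    match = re.match(r"v?(\d+(?:\.\d+)*)", text.strip())
    if not match:
        raise ValueError(f"unrecognised version string: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def supports_network_policies(k8s_version: str, cni_version: str) -> bool:
    """True when both the cluster and the VPC CNI add-on meet the minimum versions."""
    return (parse_version(k8s_version) >= MIN_K8S
            and parse_version(cni_version) >= MIN_CNI)


if __name__ == "__main__":
    print(supports_network_policies("1.27", "v1.15.0-eksbuild.1"))  # True
    print(supports_network_policies("1.24", "v1.15.0-eksbuild.1"))  # False
```

Versions are compared as integer tuples rather than strings, so that, for example, 1.9 correctly sorts below 1.14.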

The important part of the cluster.tf manifest is the vpc-cni cluster addon configuration:

cluster_addons = {
  vpc-cni = {
    most_recent              = true
    before_compute           = true
    service_account_role_arn = module.vpc_cni_irsa.iam_role_arn
    configuration_values = jsonencode({
      enableNetworkPolicy = "true"
    })
  }
}

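We configure the vpc-cni add-on to use the most recent version, and enable Network Policy support in configuration_values. Setting before_compute = true has the add-on created before the worker nodes, so the nodes come up with this configuration already in place.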
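`jsonencode` turns the HCL object into a JSON string, which is what the EKS add-on receives as its advanced configuration. The Python sketch below is only an analogy for that conversion; it mirrors the manifest in passing the flag as the string `"true"`, and the compact separators are our approximation of Terraform's whitespace-free output.

```python
import json

# Same structure as the configuration_values object in cluster.tf.
# The flag is the *string* "true", exactly as in the manifest.
addon_config = {"enableNetworkPolicy": "true"}

# Compact separators approximate the output of Terraform's jsonencode.
configuration_values = json.dumps(addon_config, separators=(",", ":"))

print(configuration_values)  # {"enableNetworkPolicy":"true"}
```

On an existing cluster, a JSON string like this is what `aws eks update-addon --configuration-values` expects.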

The service_account_role_arn is the ARN of an IAM role created in the same manifest; it is used to annotate the VPC CNI service account. This sets up IAM Roles for Service Accounts (IRSA) for the aws-node DaemonSet, instead of relying on the IAM role attached to the EC2 instances.
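Concretely, IRSA links a service account to an IAM role through the `eks.amazonaws.com/role-arn` annotation. The sketch below builds that metadata patch and rejects strings that do not look like IAM role ARNs; the helper name and the example role ARN (account 111122223333, role `vpc-cni-irsa`) are hypothetical, since in the article the ARN comes from `module.vpc_cni_irsa.iam_role_arn`.

```python
import json
import re

IRSA_ANNOTATION = "eks.amazonaws.com/role-arn"
# Accepts aws, aws-cn, aws-us-gov, ... partitions; 12-digit account ID; role path.
ROLE_ARN = re.compile(r"arn:aws[\w-]*:iam::\d{12}:role/.+")


def irsa_patch(role_arn: str) -> dict:
    """Metadata patch that associates an IAM role with a service account via IRSA."""
    if not ROLE_ARN.fullmatch(role_arn):
        raise ValueError(f"not an IAM role ARN: {role_arn}")
    return {"metadata": {"annotations": {IRSA_ANNOTATION: role_arn}}}


if __name__ == "__main__":
    # Hypothetical ARN; the real one is created by the Terraform manifest.
    print(json.dumps(irsa_patch("arn:aws:iam::111122223333:role/vpc-cni-irsa")))
```

With the managed add-on, EKS applies this annotation to the aws-node service account for you when service_account_role_arn is set; the sketch only shows what ends up on the object.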

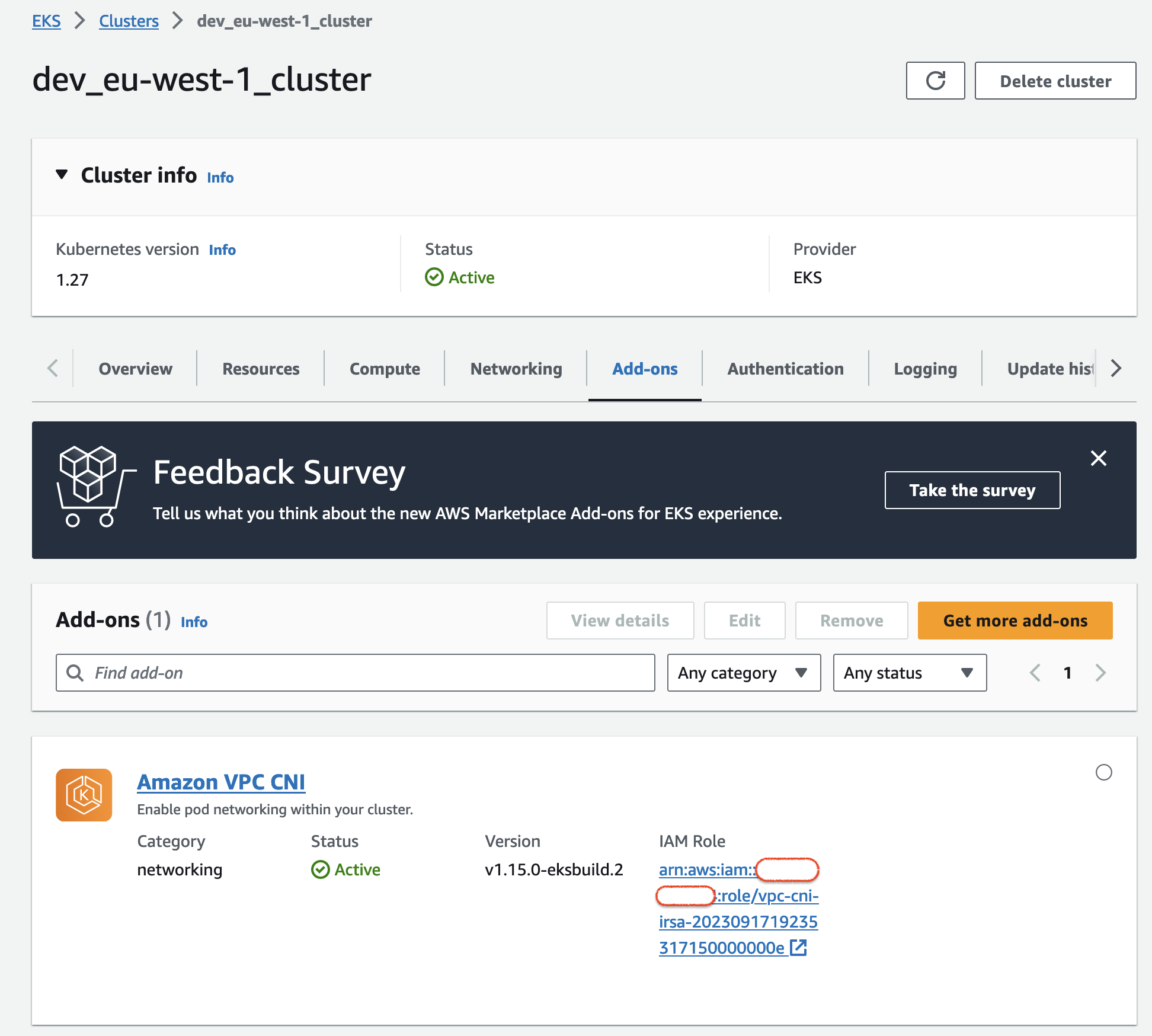

Once the cluster is created, the console shows that the VPC CNI add-on was created and is active, with version v1.15.0:

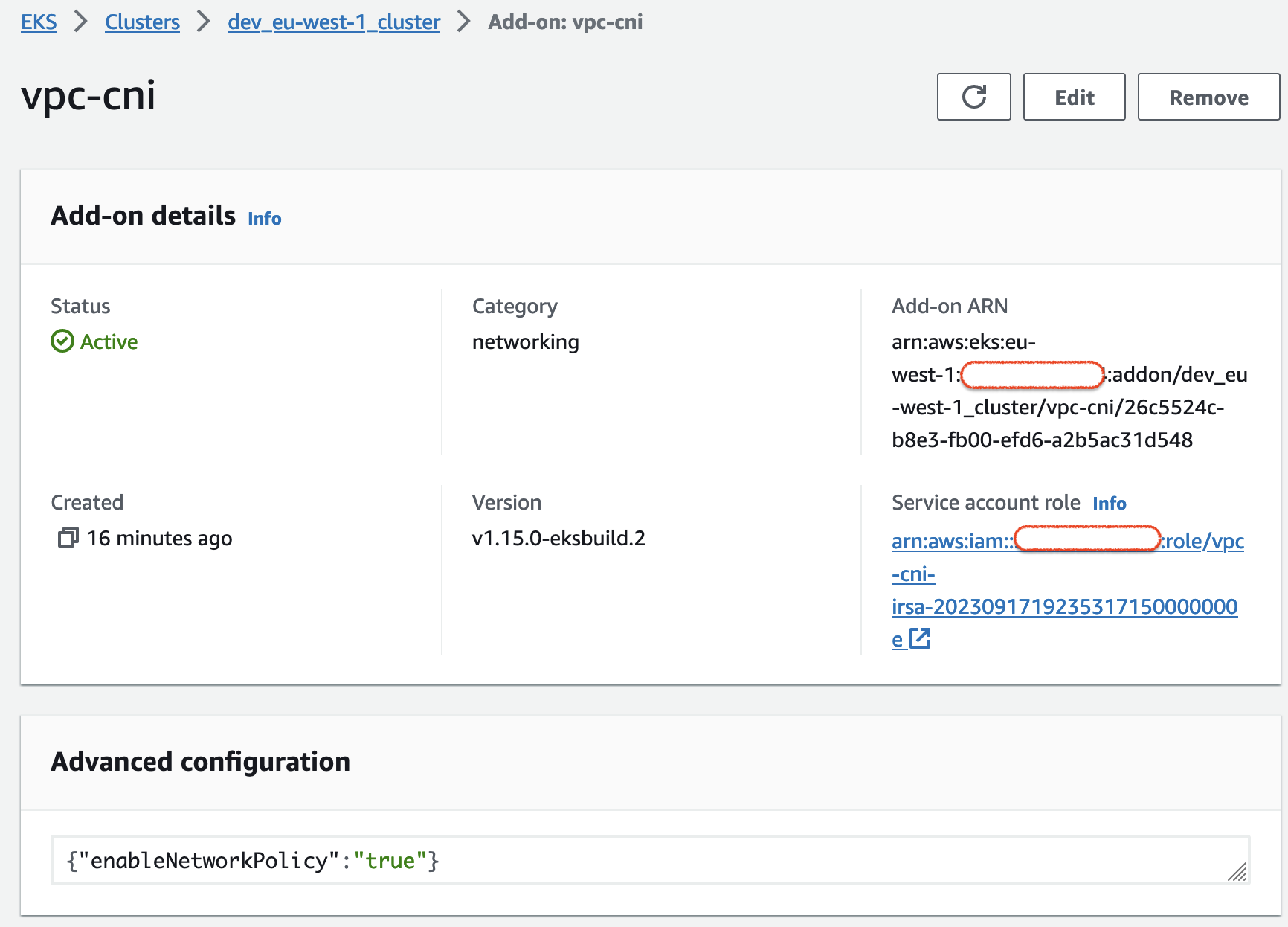


Viewing the add-on details, we can see enableNetworkPolicy set to true in the advanced configuration.
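The same check can be scripted instead of done in the console. This sketch assumes boto3 is installed and AWS credentials are configured; `describe_addon` is the EKS API call that returns the add-on, including its `configurationValues` JSON string. The helper names and the cluster name `my-cluster` are placeholders, and the parsing is kept in a pure function so it can be tried without AWS access.

```python
import json
from typing import Any, Dict


def network_policy_enabled(describe_addon_response: Dict[str, Any]) -> bool:
    """True if the add-on's advanced configuration sets enableNetworkPolicy to "true"."""
    raw = describe_addon_response.get("addon", {}).get("configurationValues") or "{}"
    config = json.loads(raw)
    # The manifest uses the string "true"; str() also tolerates a JSON boolean.
    return str(config.get("enableNetworkPolicy", "false")).lower() == "true"


def check_cluster(cluster_name: str) -> bool:
    """Query EKS for the vpc-cni add-on (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the pure helper above works without boto3

    eks = boto3.client("eks")
    response = eks.describe_addon(clusterName=cluster_name, addonName="vpc-cni")
    return network_policy_enabled(response)


if __name__ == "__main__":
    sample = {"addon": {"addonName": "vpc-cni",
                        "configurationValues": '{"enableNetworkPolicy":"true"}'}}
    print(network_policy_enabled(sample))  # True
    # print(check_cluster("my-cluster"))  # placeholder cluster name
```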

Let’s now create two namespaces for testing, frontend and backend:

kubectl create ns frontend
kubectl create ns backend

Then create an nginx deployment and a ClusterIP service in each namespace (kubectl create deployment labels the pods app=nginx, which the commands and policies below rely on):

kubectl -n frontend create deployment nginx --image=nginx
kubectl -n frontend expose deployment/nginx --type="ClusterIP" --port 80
kubectl -n backend create deployment nginx --image=nginx
kubectl -n backend expose deployment/nginx --type="ClusterIP" --port 80

At this stage, we have two namespaces, each with an nginx service. Since all pods can communicate with each other by default, each nginx pod can reach the other namespace's service.

Requesting the backend from the frontend:

FRONTEND_POD=$(kubectl -n frontend get po -l app=nginx -o jsonpath='{.items[0].metadata.name}')
kubectl -n frontend exec -ti ${FRONTEND_POD} -- curl --max-time 5 nginx.backend.svc.cluster.local
<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
<style>
html { color-scheme: light dark; }
body { width: 35em; margin: 0 auto;
font-family: Tahoma, Verdana, Arial, sans-serif; }
</style>
</head>
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working. Further configuration is required.</p>
<p>For online documentation and support please refer to
<a href="http://nginx.org/">nginx.org</a>.<br/>
Commercial support is available at
<a href="http://nginx.com/">nginx.com</a>.</p>
<p><em>Thank you for using nginx.</em></p>
</body>
</html>

Let’s now create a default-deny policy in the backend namespace (netpol-deny-all.yaml) that blocks all ingress traffic to the backend pods:

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: default-deny-ingress
  namespace: backend
spec:
  podSelector: {}
  policyTypes:
  - Ingress

kubectl apply -f netpol-deny-all.yaml
networkpolicy.networking.k8s.io/default-deny-ingress created
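Why does this deny everything? An empty `podSelector` (`{}`) selects every pod in the policy's namespace, and listing `Ingress` in `policyTypes` without any `ingress` rules means no inbound peer is allowed. The toy model below encodes just those rules plus the Kubernetes default for a missing `policyTypes`; the function names are illustrative, and real enforcement is done by the VPC CNI node agent, not by code like this.

```python
from typing import Dict, List

Labels = Dict[str, str]


def matches(match_labels: Labels, labels: Labels) -> bool:
    """matchLabels semantics: every listed key/value must be present; {} matches everything."""
    return all(labels.get(key) == value for key, value in match_labels.items())


def policy_types(policy: dict) -> List[str]:
    """Kubernetes default: Ingress is always implied; Egress only if egress rules exist."""
    spec = policy["spec"]
    if "policyTypes" in spec:
        return spec["policyTypes"]
    return ["Ingress"] + (["Egress"] if spec.get("egress") else [])


def denies_all_ingress(policy: dict, pod_namespace: str, pod_labels: Labels) -> bool:
    """True if this policy selects the pod for ingress and lists no allowed peers."""
    selected = (pod_namespace == policy["metadata"]["namespace"]
                and matches(policy["spec"]["podSelector"].get("matchLabels", {}), pod_labels))
    return selected and "Ingress" in policy_types(policy) and not policy["spec"].get("ingress")


# The default-deny-ingress manifest, as a Python dict.
default_deny = {
    "metadata": {"name": "default-deny-ingress", "namespace": "backend"},
    "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
}

print(denies_all_ingress(default_deny, "backend", {"app": "nginx"}))   # True
print(denies_all_ingress(default_deny, "frontend", {"app": "nginx"}))  # False
```

Because policyTypes lists only Ingress, this policy leaves egress from the backend pods unrestricted.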

Once this policy is applied, the frontend can no longer reach the backend:

kubectl -n frontend exec -ti ${FRONTEND_POD} -- curl --max-time 5 nginx.backend.svc.cluster.local
curl: (28) Connection timed out after 5001 milliseconds
command terminated with exit code 28
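The failure shows up as curl's exit code 28 (operation timed out), which kubectl exec passes through. To repeat this check without reading the output by hand, a small wrapper can run the same request and classify the result. This is a sketch: it assumes kubectl is on the PATH and pointed at the cluster, it drops `-ti` because no terminal is needed, and the helper names and the pod name `nginx-pod` are hypothetical.

```python
import subprocess
from typing import List

CURL_TIMEOUT = 28  # curl exit code for "operation timed out"


def curl_command(namespace: str, pod: str, url: str, max_time: int = 5) -> List[str]:
    """Same request as in the walkthrough, without -ti and with the body discarded."""
    return ["kubectl", "-n", namespace, "exec", pod, "--",
            "curl", "--silent", "--output", "/dev/null", "--max-time", str(max_time), url]


def classify(returncode: int) -> str:
    """Map the exit code propagated by kubectl exec to a verdict."""
    if returncode == 0:
        return "reachable"
    if returncode == CURL_TIMEOUT:
        return "blocked (timed out)"
    return f"error (exit code {returncode})"


def check(namespace: str, pod: str, url: str) -> str:
    """Run the request against the live cluster and classify the outcome."""
    result = subprocess.run(curl_command(namespace, pod, url), capture_output=True, text=True)
    return classify(result.returncode)


if __name__ == "__main__":
    print(curl_command("frontend", "nginx-pod", "nginx.backend.svc.cluster.local"))
    # Against a real cluster, use the pod name stored in FRONTEND_POD:
    # print(check("frontend", "<frontend pod>", "nginx.backend.svc.cluster.local"))
```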

Let’s now create a second policy (netpol-allow-nginx-front.yaml) that allows nginx pods from the frontend namespace to reach the backend:

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: netpol-allow-nginx-front
  namespace: backend
spec:
  podSelector: {}
  ingress:
  - from:
    - podSelector:
        matchLabels:
          app: nginx
      namespaceSelector:
        matchLabels:
          kubernetes.io/metadata.name: frontend

kubectl apply -f netpol-allow-nginx-front.yaml
networkpolicy.networking.k8s.io/netpol-allow-nginx-front created

After applying this policy, the frontend nginx pod can again request the backend.
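This works because NetworkPolicies are additive: a pod selected by several policies accepts traffic matched by any of them, so the allow policy re-opens access without deleting the default deny. Within one `from` entry, `podSelector` and `namespaceSelector` are ANDed, so only `app: nginx` pods in the `frontend` namespace match. Written as two separate list items, they would be ORed instead: `app: nginx` pods in backend's own namespace (a lone podSelector is scoped to the policy's namespace) or any pod in `frontend`. The toy evaluator below models only matchLabels; policies are flattened dicts rather than full manifests, ports and ipBlock are ignored, and the `debug` label is hypothetical.

```python
from typing import Dict, List

Labels = Dict[str, str]

# Kubernetes adds kubernetes.io/metadata.name to every namespace automatically.
NAMESPACE_LABELS: Dict[str, Labels] = {
    "frontend": {"kubernetes.io/metadata.name": "frontend"},
    "backend": {"kubernetes.io/metadata.name": "backend"},
}


def matches(selector: dict, labels: Labels) -> bool:
    """matchLabels only: an empty selector ({}) matches everything."""
    return all(labels.get(k) == v for k, v in selector.get("matchLabels", {}).items())


def peer_matches(peer: dict, policy_ns: str, src_ns: str, src_labels: Labels) -> bool:
    """One `from` entry: selectors inside the same entry are ANDed."""
    if "namespaceSelector" in peer:
        ns_ok = matches(peer["namespaceSelector"], NAMESPACE_LABELS[src_ns])
    else:
        ns_ok = src_ns == policy_ns  # a lone podSelector only covers the policy's namespace
    return ns_ok and matches(peer.get("podSelector", {}), src_labels)


def ingress_allowed(policies: List[dict], dst_ns: str, dst_labels: Labels,
                    src_ns: str, src_labels: Labels) -> bool:
    """Every policy is treated as an Ingress policy; allow rules are unioned."""
    selecting = [p for p in policies
                 if p["namespace"] == dst_ns and matches(p["podSelector"], dst_labels)]
    if not selecting:
        return True  # pod is not isolated: all ingress allowed
    for policy in selecting:
        for rule in policy.get("ingress", []):
            peers = rule.get("from")
            if not peers:  # a rule with no `from` admits every source
                return True
            if any(peer_matches(p, policy["namespace"], src_ns, src_labels) for p in peers):
                return True
    return False


FRONTEND_NS = {"matchLabels": {"kubernetes.io/metadata.name": "frontend"}}
NGINX = {"matchLabels": {"app": "nginx"}}
default_deny = {"namespace": "backend", "podSelector": {}, "ingress": []}
# One entry (as in the policy above): nginx pods AND in frontend.
allow_and = {"namespace": "backend", "podSelector": {},
             "ingress": [{"from": [{"podSelector": NGINX, "namespaceSelector": FRONTEND_NS}]}]}
# Two entries: nginx pods in backend OR any pod in frontend.
allow_or = {"namespace": "backend", "podSelector": {},
            "ingress": [{"from": [{"podSelector": NGINX}, {"namespaceSelector": FRONTEND_NS}]}]}

nginx, debug = {"app": "nginx"}, {"app": "debug"}
print(ingress_allowed([default_deny], "backend", nginx, "frontend", nginx))             # False
print(ingress_allowed([default_deny, allow_and], "backend", nginx, "frontend", nginx))  # True
print(ingress_allowed([default_deny, allow_and], "backend", nginx, "frontend", debug))  # False
print(ingress_allowed([default_deny, allow_or], "backend", nginx, "frontend", debug))   # True
```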

Summary

Installing a third-party plugin is no longer required to use Network Policies on EKS: they are supported natively by the VPC CNI add-on (v1.14.0 and later, on EKS 1.25 and above).

Because enforcement is based on eBPF rather than sequentially evaluated iptables chains, it is also very efficient.

Finally, being the default and native solution on an EKS cluster, the VPC CNI can be a no-brainer choice in most cases.